When Bot Traffic Suddenly Multiplies by a Thousand

This morning, one of our customers experienced intermittent outages. Not a full breakdown, more like this: everything works… until it suddenly doesn't.

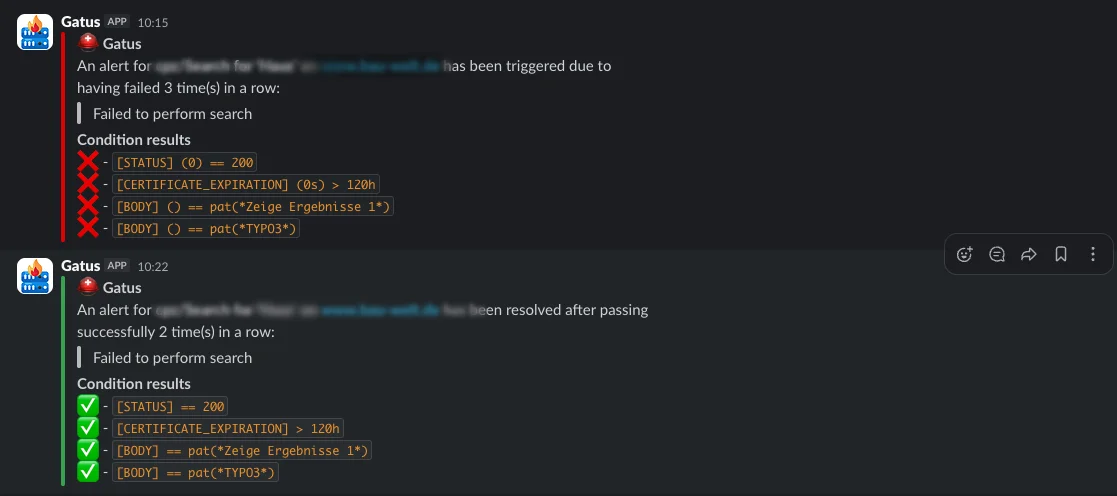

We didn't hear about it from users first. Instead, our monitoring flagged repeated issues in Slack.

We use Gatus to continuously check key website functions, including search. The monitoring runs externally, deliberately independent from the customer's infrastructure. That way, we catch issues that might not be immediately visible from inside the system.

The Analysis: A Surge in Requests, but No Obvious Culprit

After a quick system check, we turned to the logs. All logs are aggregated in a central Graylog instance, where we can analyze them efficiently.

In this case, we focused on request logs from Amazon CloudFront, which sits in front of the application as a CDN.

The initial finding was clear: a significant spike in incoming requests.

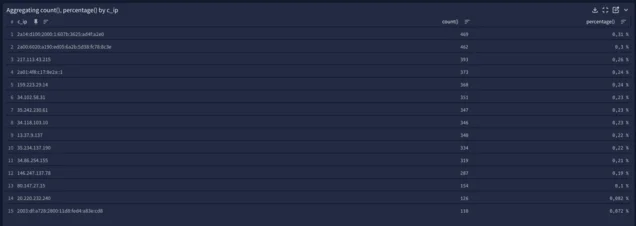

Usually, the root cause becomes obvious quickly. Often it is a small number of IP addresses generating a large share of traffic. This time, it was different.

- No obvious clustering of individual IPs

- But a strong concentration on a specific URL

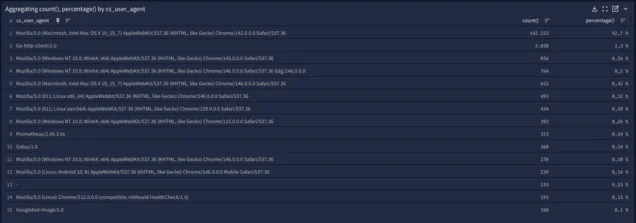

- And a noticeable pattern in the user agent: a slightly outdated Chrome version

That gave us a much clearer picture.

Instead of the usual 1 to 2 requests per minute to the configurator, we suddenly saw close to 2,000 requests per minute.

That is too much for the system, especially since these responses are generated dynamically and are not cached.
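The comparison of IPs, URLs, and user agents described above can be sketched as a "share of the top value" check per log field. This is only an illustration: the field names (`ip`, `path`, `user_agent`), the `/configurator` path, and the sample records are hypothetical placeholders, not the actual CloudFront log format or the customer's data.

```python
from collections import Counter

def top_share(records, field):
    """Return (most common value, its share of all records) for one field."""
    counts = Counter(r[field] for r in records)
    value, hits = counts.most_common(1)[0]
    return value, hits / len(records)

# Synthetic sample: 100 requests, each from a different IP,
# all hitting the same path with the same user agent.
records = [
    {"ip": f"198.51.100.{i}", "path": "/configurator", "user_agent": "Chrome/OLD"}
    for i in range(100)
]

for field in ("ip", "path", "user_agent"):
    value, share = top_share(records, field)
    print(f"{field}: top value {value!r} accounts for {share:.0%} of requests")
```

A low top share for `ip` combined with a high top share for `path` and `user_agent` is the signature described here: no single noisy client, but a very uniform target and fingerprint.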

The Real Pattern: Distributed, but Coordinated

Digging deeper revealed a familiar pattern:

- 3 to 6 requests per IP address

- Then a switch to a new IP

- Same user agent across requests

This is where traditional protection mechanisms start to break down. Per-IP rate limiting, for example, becomes far less effective, because each address stays well below any reasonable threshold before it is swapped out.
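A minimal sketch of why counting per IP misses this pattern, while grouping by URL and user agent makes it visible. The threshold, field names, path, and synthetic traffic are assumptions chosen for illustration; they are not taken from the real logs.

```python
from collections import Counter, defaultdict

PER_IP_LIMIT = 10  # hypothetical per-IP threshold for a one-minute window

def per_ip_offenders(requests, limit=PER_IP_LIMIT):
    """IPs whose request count in the window exceeds the per-IP limit."""
    counts = Counter(r["ip"] for r in requests)
    return {ip for ip, n in counts.items() if n > limit}

def fingerprint_stats(requests):
    """Group by (path, user agent) and return (total requests, distinct IPs)."""
    groups = defaultdict(lambda: {"requests": 0, "ips": set()})
    for r in requests:
        group = groups[(r["path"], r["user_agent"])]
        group["requests"] += 1
        group["ips"].add(r["ip"])
    return {key: (g["requests"], len(g["ips"])) for key, g in groups.items()}

# Synthetic one-minute window: 400 IPs sending 5 requests each.
window = [
    {"ip": f"10.0.{i // 256}.{i % 256}", "path": "/configurator", "user_agent": "Chrome/OLD"}
    for i in range(400)
    for _ in range(5)
]

print(per_ip_offenders(window))   # no IP exceeds the limit
print(fingerprint_stats(window))  # one fingerprint: 2000 requests from 400 IPs
```

The per-IP view reports nothing, while the fingerprint view shows a single path and user agent pair responsible for the whole spike.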

Side note: a setup like this does not care about robots.txt. That file is only a suggestion, not a protection mechanism.

Whether this was a targeted attack or simply a poorly configured bot is hard to say. In practice, it doesn't matter. The impact on the system is the same.

The Key Difference: Combinations Instead of Targeted Access

What we observed here reflects a broader shift in how automated systems interact with websites. This wasn't a typical search scenario. The affected area was a faceted interface, where results can be filtered and refined using multiple parameters.

The bot wasn't querying specific content. It was systematically exploring combinations: parameters on, parameters off, values changing, all of it happening at high speed.

For the system, this means:

- Every request is potentially unique

- Very little reuse of results

- Caching becomes ineffective due to the sheer number of variations

Traditional crawling tends to be linear. This pattern is combinatorial. And combinatorics scale fast.

What takes a human a few clicks turns into thousands of variations per minute when automated.
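To make the scaling concrete, here is a rough count of filter states under a simplifying assumption: each facet is either unset or set to exactly one of its values, so the total is the product of (number of values + 1) over all facets. The facet sizes below are made up, not taken from the affected configurator.

```python
from math import prod

def facet_combinations(values_per_facet):
    """Count filter states when each facet is unset or set to one of its values."""
    return prod(n + 1 for n in values_per_facet)

# Hypothetical facet sizes, e.g. six filters with 3 to 10 options each.
facets = [5, 8, 4, 10, 6, 3]

print(facet_combinations(facets))        # 83160 distinct filter states
print(facet_combinations(facets + [4]))  # one more facet with 4 options: 415800
```

Six modest filters already yield tens of thousands of distinct URLs, and every additional facet multiplies that number. Multi-select facets would grow even faster.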

Why This Becomes Critical

At first glance, these requests look completely harmless:

- They are valid requests

- They use realistic parameters

- They don't trigger obvious errors

- Each individual client generates only a small number of requests

But in aggregate, they create exactly the kind of load that pushes systems to their limits. Not because any individual request is unusually expensive, but because too many different, uncacheable requests arrive at the same time.

Or put differently:

The problem is not the single request. It is the combination of variety and speed.

The Solution: Challenges Instead of Blocking

Simply blocking traffic was not a viable option. With constantly rotating IP addresses, we would either react too late or risk blocking legitimate users.

Instead, we applied controls where they are most effective: using AWS WAF, we introduced a challenge mechanism specifically for the configurator.

The idea is simple: real users pass without friction, automated systems typically fail the challenge. This allowed us to reduce the load significantly without affecting legitimate usage.

The effect was immediate. Request volume dropped, and the system stabilized.
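As an illustration, a path-scoped challenge rule could look roughly like the sketch below, which builds the rule as a Python dict and prints it as JSON. This is not the rule we actually deployed. The path prefix `/configurator` and the rule name are assumptions, and the field names follow the AWS WAFv2 rule JSON format as we understand it, so they should be checked against the AWS documentation before use.

```python
import json

CONFIGURATOR_PATH = "/configurator"  # assumed path prefix, for illustration only

def build_challenge_rule(path_prefix, priority=0):
    """Build a WAFv2-style rule that challenges requests under one path prefix."""
    name = "challenge-configurator"  # hypothetical rule name
    return {
        "Name": name,
        "Priority": priority,
        "Statement": {
            "ByteMatchStatement": {
                "SearchString": path_prefix,
                "FieldToMatch": {"UriPath": {}},
                "TextTransformations": [{"Priority": 0, "Type": "NONE"}],
                "PositionalConstraint": "STARTS_WITH",
            }
        },
        # Challenge instead of Block: clients that pass the challenge proceed.
        "Action": {"Challenge": {}},
        "VisibilityConfig": {
            "SampledRequestsEnabled": True,
            "CloudWatchMetricsEnabled": True,
            "MetricName": name,
        },
    }

rule = build_challenge_rule(CONFIGURATOR_PATH)
print(json.dumps(rule, indent=2))
```

Scoping the rule to a single path keeps the rest of the site untouched and limits the challenge to the one endpoint that cannot be cached.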

Takeaway

Traffic patterns from bots and AI systems are no longer theoretical. They are part of everyday operations.

It is no longer a single IP generating high load. It is many IPs generating small amounts of traffic, but in a coordinated way.

To the system, this looks like normal usage. In reality, it is the opposite.
